Hancheng Min

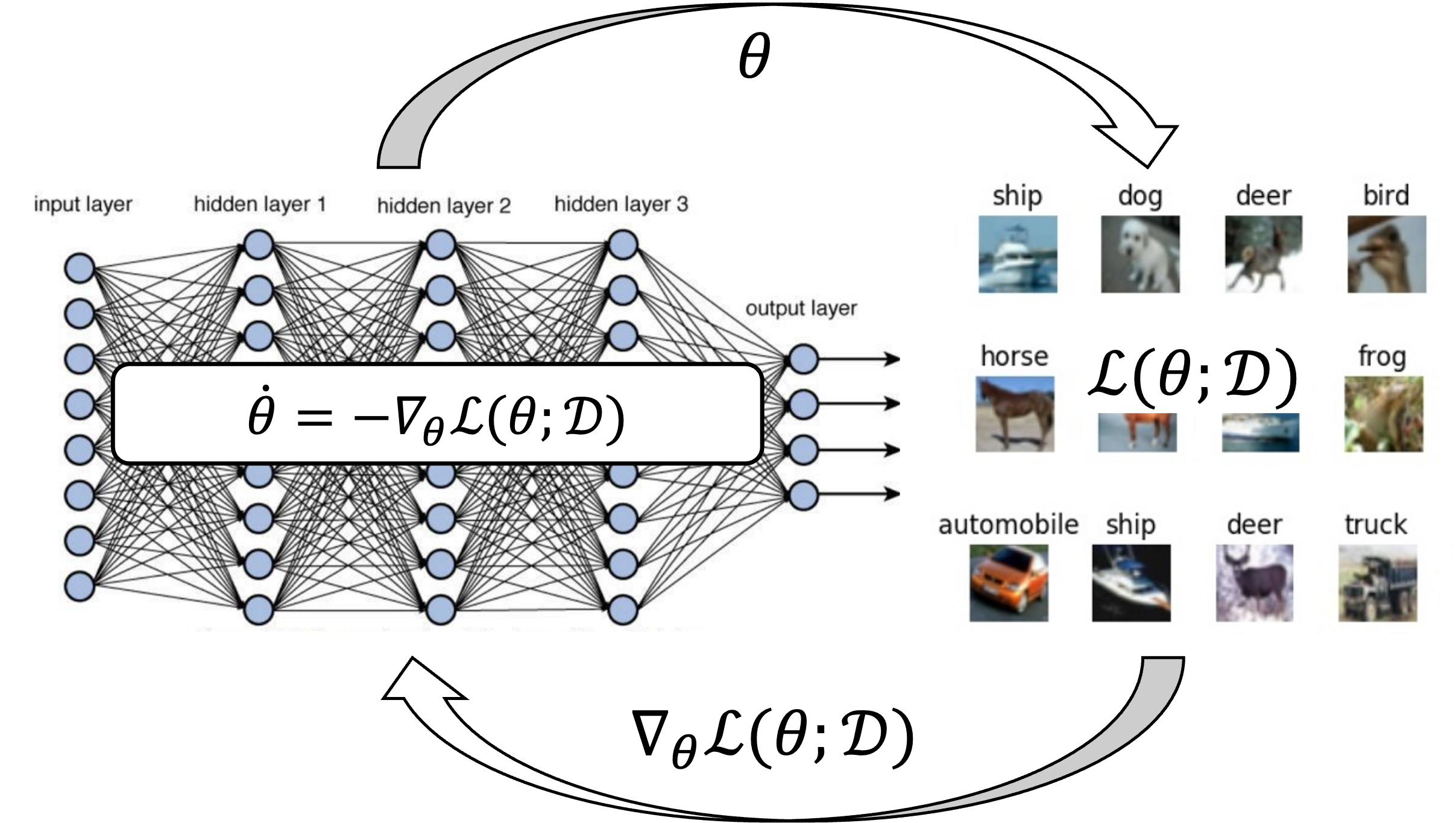

I am a Tenure-track Associate Professor at the Institute of Natural Sciences (INS) and the School of Mathematics (SMS), Shanghai Jiao Tong University. My research centers on building mathematical principles that facilitate the interplay between machine learning and dynamical systems. Currently, I am mainly interested in analyzing gradient-based optimization algorithms on overparametrized neural networks from a dynamical systems perspective.

Recent Updates

[May, 31, 2026] I gave a talk Slow Coherency, Aggregation and Clustering in Networked Systems at the Green Control Workshop 2026 at Peking University

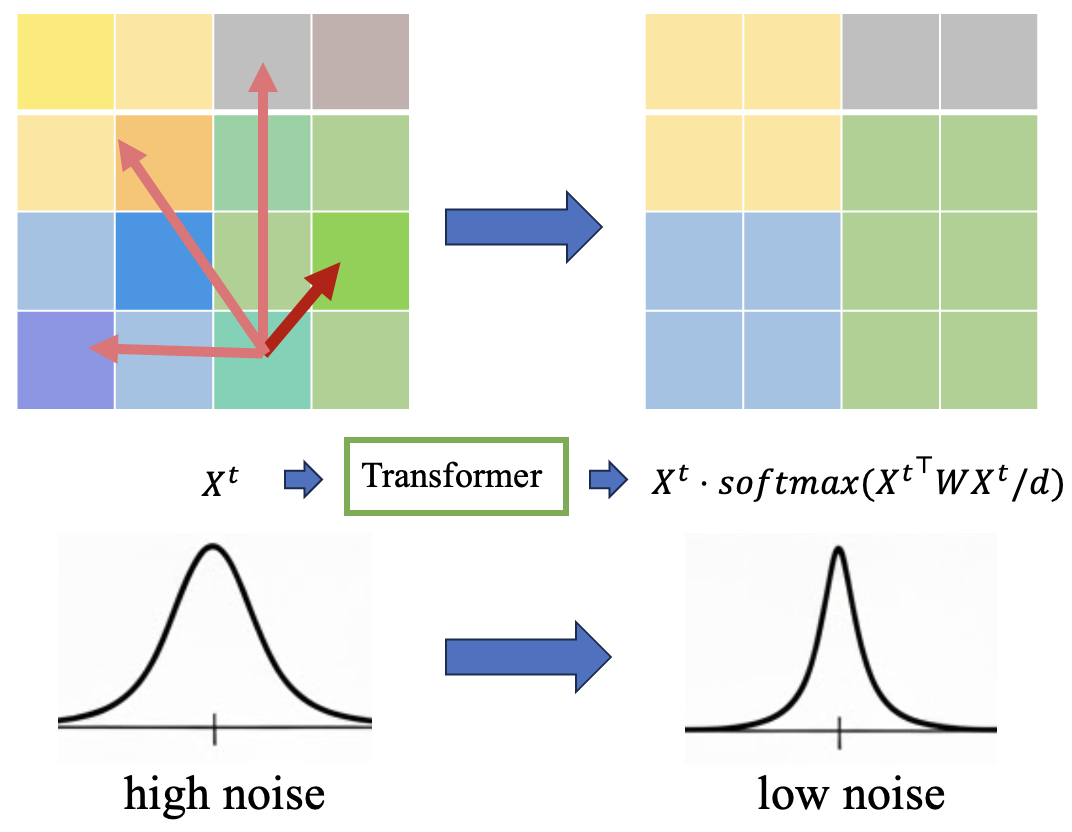

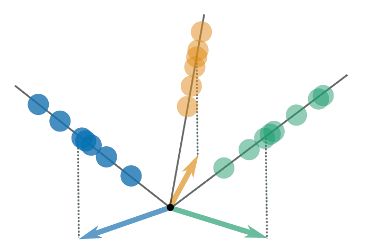

[May, 01, 2026] Our paper Transformers Learn the Optimal DDPM Denoiser for Multi-Token GMMs has been accepted to ICML 2026!

[Feb, 11, 2026] Our tutorial paper On the Convergence, Implicit Bias and Edge of Stability of Gradient Descent in Deep Learning has been accepted to IEEE Signal Processing Magazine!

[Dec, 10, 2025] I gave a talk Understanding Incremental Learning with Closed-form Solution to Gradient Flow on Overparameterized Matrix Factorization at CDC 2025 in Rio

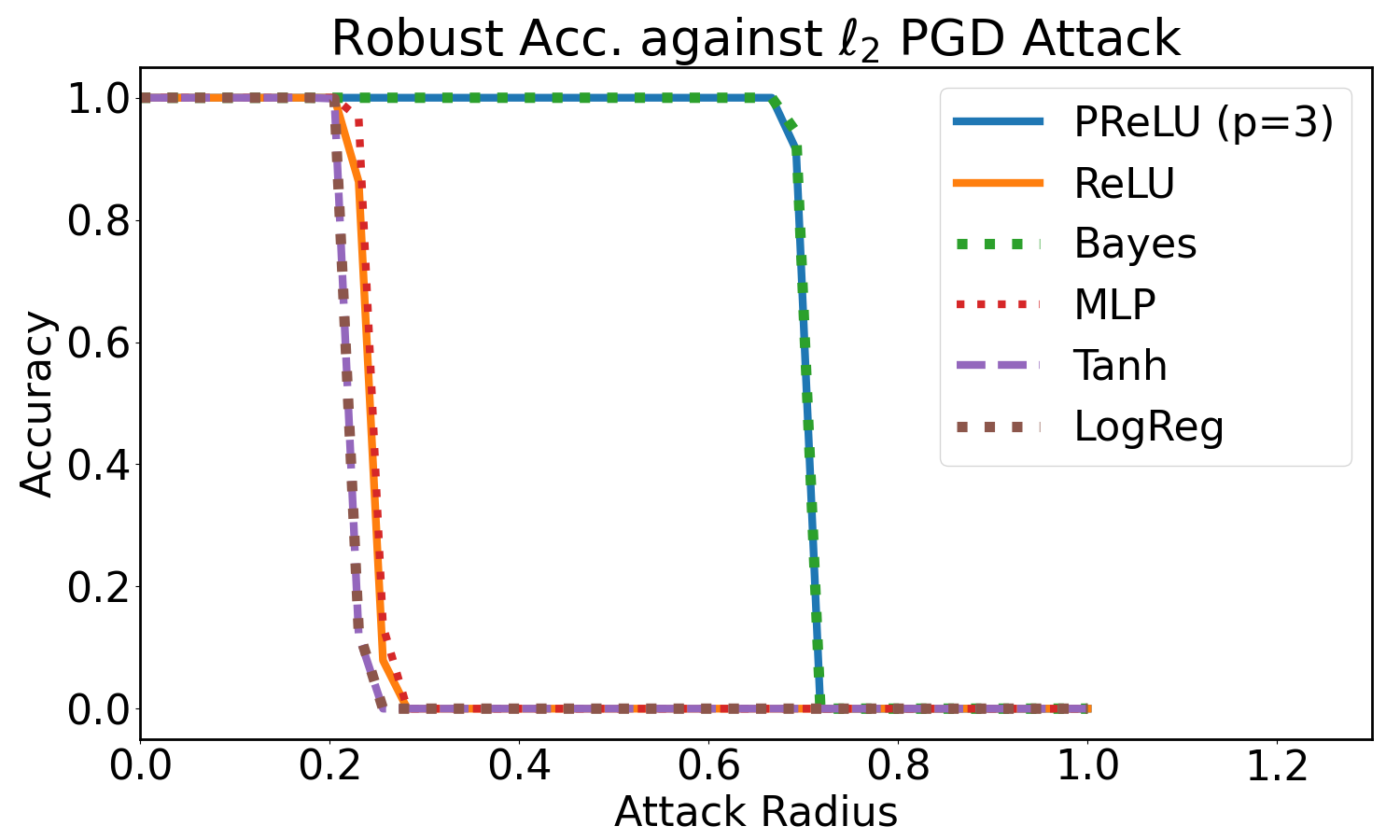

[Nov, 23, 2025] I gave a talk Learning Dynamics in the Feature Learning Regime: Implicit Bias, Neural Collapse, and Robustness at NYU Shanghai

Recent Publications

- On the Convergence, Implicit Bias and Edge of Stability of Gradient Descent in Deep Learning. IEEE Signal Processing Magazine (IEEE SPM), May 2026. To appear.

Selected Publications

- On the Convergence, Implicit Bias and Edge of Stability of Gradient Descent in Deep Learning. IEEE Signal Processing Magazine (IEEE SPM), May 2026. To appear.